Is this the death of big data?

Published on 9月 8, 2020

Ferran Torrent

Senior Data Scientist at Aimsun

The era of big data is ending. This might seem like a crazy thing to say at a time when machine learning, deep learning and data science are being integrated in ever more businesses and institutions. But the truth is that the AI community is already moving beyond big data.

It is undeniable that big data gave great momentum to AI research: big datasets and advances in computation allowed us to grow from models fed with structured data to models fed with unstructured data, which opened new doors to deep learning. Research in deep learning brought new architectures of recurrent neural networks, convolutional neural networks, and graph neural networks, which in turn permitted the development of models capable of reaching (and surpassing) human performance.

But then deep learning started to get stuck. The solution to solving new complex problems is to build large models with billions of parameters. These models are useful as spearheads and for research purposes, but they are not practical for most applications. One of the main successes of deep learning was the ability to train and run models on ordinary computers, so, clearly, we either need to speed up our everyday computers or make deep learning more efficient.

One of the trends for tackling this inefficiency is to take a data-centric approach. The traditional, model-centric approach commonly used by data scientists and engineers is to take a dataset, decide on a type of model that fits the characteristics of the problem and the dataset, and then use the dataset to train and tune the model. There are frequently various types of models that could achieve a similar performance, or none can meet the requirements with the available dataset. Conversely, a data-centric approach focuses on tuning the dataset to improve the performance of the final model. It mainly improves the efficiency of the dataset but indirectly it also improves the efficiency of the machine learning or deep learning model. This procedure can be extremely useful in contexts where the available datasets are small, or it is difficult to gather new data.

Another trend is to increase computational power. This hasn’t been a problem over the last few decades, on the contrary, Moore’s law has been fulfilled, providing a huge increase in computational power year after year. But Moore’s law seems to be petering out, and other approaches have arisen. Google improved the efficiency of linear algebra calculations, the base of deep learning, with the tensor processing unit, and even used deep learning for improving its design. The use of photonics is also being explored. Incentivized by the fact that optical fibers can support higher data rates with less power than electrical wires, researchers try to compute with data instead of only communicating data. But modern computers are made of transistors that work in the non-linear region to emulate logic gates. Combining logic gates, computers can replicate summation, multiplication, etc. The problem is that photonics stubbornly follows the linear Maxwell equations. So, there are no non-linearities to emulate logic gates or transistors. But what if photonics is used for linear algebra? Then, no non-linearity is needed, and the main burden of deep learning can be carried out with photonics. Under this hypothesis, photonics is expected to help in increasing computation power. Quantum computing is another bet to bring a completely new context in computation power specially, the one related to solving optimization problems. Training a deep learning model is basically an optimization problem that consists of finding combination of parameters, so the model achieves the best performance in a particular task.

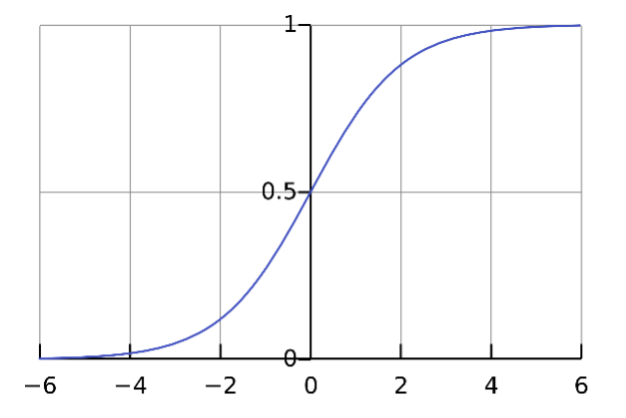

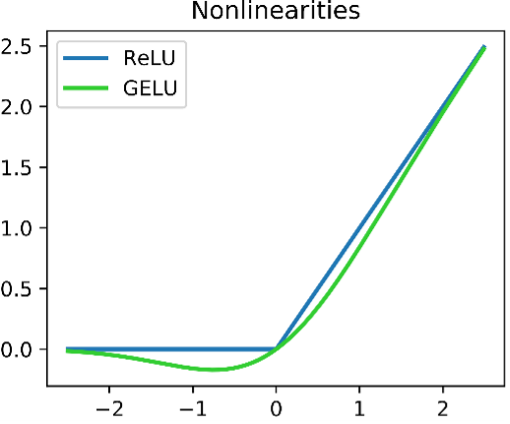

Biology is also a source of new questions: for example, if a tiny dragonfly can accurately estimate the trajectory of a pray and correct its trajectory by sending the right orders to the wings and tail in milliseconds with a very small brain, why there is no computer with the same accuracy? Simple but deep questions such this one incentivizes research in neuroscience and AI that is expected to bring new ideas, new ways of assembling and organizing neural networks, and new operations, that will aid more efficient deep learning or machine learning and lead to a more general AI. A small improvement can spark a great advance, for example: in the 90s, neural networks used as non-linear function the sigmoid. For years, researchers sought a non-linear function with smooth derivatives to help backpropagation, the algorithm that updates neural network parameters using the derivates. But suddenly the ReLU (Rectified Linear Unit) activation function was proposed, and despite some initial skepticism, it worked incredibly well and solved one the main problems of squashing functions such as sigmoid, the gradient vanishing problem. ReLU was one of the sparks that made convolutional neural networks work as it allowed several layers to be stacked, and so developing neural networks able to solve more complex computer vision problems.

One of the ideas that are more actively sought permit deep learning to generalize better. It is well-known that a deep learning model can excel in a particular task, but when it tries to solve a similar but different task, it fails. For example, if we train a neural network to recognize traffic objects or signals, we cannot ask it to recognize the color of a traffic light. And if we update the model with data of traffic lights with different colors, the model forgets everything it learned about traffic objects or traffic signals, and it is as starting from scratch, or even worse, it starts from a previous solution (model) that may end up with another solution (another model) that is worse than if it started from scratch. We learned to freeze some layers and only update the latest layers to reutilize part of previously trained models. While this mitigates the problem it doesn’t solve it, we reuse “knowledge” encoded in the frozen layers, but the updated layers also suffer from forgetfulness. If we want a model that continuously learns but remembers important things, or things that are common to two problems, or very important for the previous learnt problems, we must solve this. An example of the work on this field is the Deep Mind paper that proposes a way to identify which parameters are important for a first learnt problem, and a way to incentivize updating non-important weights, instead of the important ones, when learning a new problem.

We have hit the ceiling of deep learning combined with big data and somehow, we need to find a way to break through and start a new AI paradigm. In the past, when AI hit a ceiling, it suffered two long winters. However, now we can be optimistic about fresh advances that will open new doors in the next few years, and even if these new advances do not come as soon as we’d like, there is a lot of work to do in generalizing current AI, deploying it in more fields and solving new problems.

The answer to the title is no. Big data is here to stay. It is needed to support new progress, but it won’t be the cornerstone.